Falling Response Rates to USDA Crop Surveys: Why It Matters

USDA’s National Agricultural Statistics Service (NASS) conducts a series of surveys throughout the year to assess farmer planting decisions and production conditions. Among other things, those surveys use farmer responses to estimate crop acreage and yields and provide early information on likely production outcomes for various crops in the current crop year. Those estimates underlie USDA and private analysis that affect markets throughout the year. The public benefits of those surveys are notable, and the literature on those benefits was recently reviewed by the Council on Food, Agricultural and Resource Economics (C-FARE), which highlighted how public information on market prices and quantities helps improve market efficiency (Lusk, 2013).

Producers and other decision makers depend on the objective information and decision support tools that analysts and researchers develop from NASS producer survey data. NASS survey enumerators have long responded to producer questions about the value of USDA surveys by pointing out how accurate NASS data can lead to improved data for Risk Management Agency (RMA) crop insurance payments and revenue support programs; improved crop recommendations by local extension agents; improved information for local agribusiness planning; and improved information for individual producers’ marketing and future planting decisions (USDA-NASS, 2015). The quality of the information and analysis provided from NASS data, however, depends on a high level of producer participation in these surveys. As the number of respondents falls, the statistical reliability of estimates and forecasts declines, and the value of NASS estimates for a host of other purposes declines as well. The purpose of this article is to examine the impact of response rates on county yield estimates for an important new farm program, the ARC-CO program.

NASS Survey Response Rates

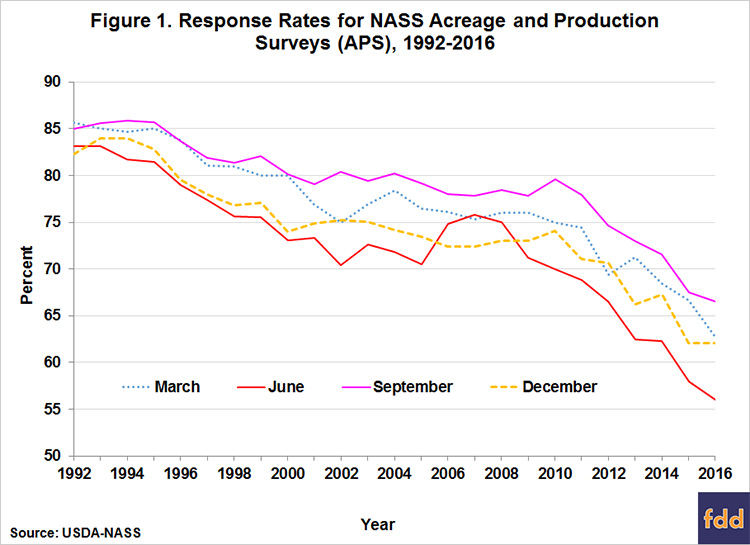

Response rates on NASS crop acreage and production surveys have been falling in recent decades (Ridolfo, Boone, and Dickey, 2013). From response rates of 80-85 percent in the early 1990s, rates have fallen below 60 percent in some cases (Figure 1). Of even greater concern, there appears to be an acceleration in the decline in the last 5 years or so, suggesting the possibility that this decline reflects a long-term permanent change.

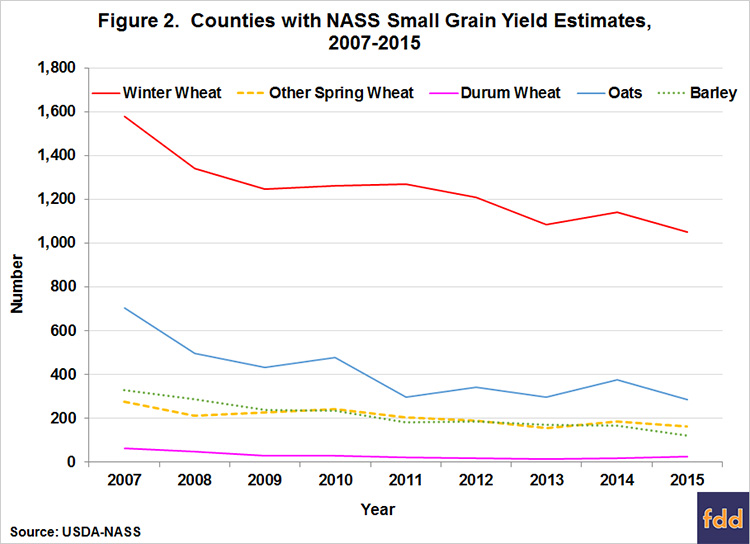

Reduced response rates can introduce bias or error into the estimates released by USDA. For example, bias may occur if higher yielding farms drop out. Reduced response will almost assuredly introduce error into the estimates, making them noisier and less accurate. This will be most noticeable in county estimates. The NASS area-sampling frame remains most accurate at the national level; response rates become increasingly important for estimates at the state and especially county levels. So while the quality of national and published state estimates is still high, falling response rates have led to decreases in the number of counties for which estimates can be published (Figure 2). Increasing sample sizes might help to increase the number of responses, but the cost of that solution can be prohibitive, and if the reasons behind low response rates are systemic, larger sample sizes will not necessarily counteract lower response rates.

Identifying the reasons for such a change might suggest possible solutions. Producers typically claim that they do not have enough time or that they are concerned about data privacy (McCarthy and Beckler, 2000). Anecdotally, other producers have said they spend too much time filling out USDA reports and that is why they do not want to spend time filling out another survey. However, research has not confirmed that response burden has contributed to the likelihood that a farmer will refuse to fill out a survey in the future (McCarthy, Beckler, and Qualey, 2006), although NASS analysis of non-response for the 2009 June Area Survey found that “would not take time/too busy” and “refuses on all surveys” accounted for more than a third (36%) of non-responses (Tran et al., 2011).[1]

Increasingly rapid declines in response rates in the current decade appear to be an acceleration of some of the trends that have lowered response rates across the range of government household surveys over the last 25 years (Meyer, Mok, and Sullivan, 2015). The difficulty in accessing households has increased steadily, often attributed to new telephone technologies like caller ID and replacement of land lines with cell phones. Over the same period, refusal rates have increased, and although refusals began to decline in the 2000s, the rate has begun to rise again (Ridolfo, Boone, and Dickey, 2013). Given the steady increase in privacy technologies and changes in telephone use, these conditions seem unlikely to improve, although new technologies, such as on-line surveys and direct on-farm data access, could offer solutions at some point.

Greater inaccessibility also increases the costs of NASS surveys. Producer cooperation in responding to surveys as quickly as possible would go a long way toward reducing the cost of data collection by decreasing the need for repeated contacts. In order to achieve the highest possible response rates, NASS surveys are conducted first by Internet and mail contact, and then followed up with telephone and personal interviews. The cost of these contacts increases substantially at each stage, from $2 per respondent for the Internet survey to $4 for mail, $12 for telephone, and more than $50 per respondent for a personal interview. So the more responses received at the earliest stages of the survey, the more cost effective the collection of the needed data.
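To make the cost escalation concrete, the short Python sketch below applies the per-respondent figures quoted above to two response mixes. The mixes themselves are hypothetical and chosen only for illustration, the function name `collection_cost` is ours, and the personal-interview cost is entered as $50 even though the text says "more than $50", so the totals are lower bounds.

```python
# Per-respondent contact costs by collection mode, from the figures quoted above.
# The personal-interview figure is "more than $50", so 50 is a lower bound.
COST_PER_RESPONSE = {"internet": 2, "mail": 4, "telephone": 12, "personal": 50}


def collection_cost(responses_by_mode):
    """Return (total_cost, average_cost_per_response) for a mix of responses."""
    total = sum(COST_PER_RESPONSE[mode] * n for mode, n in responses_by_mode.items())
    count = sum(responses_by_mode.values())
    return total, (total / count if count else 0.0)


# Two hypothetical mixes of 1,000 completed responses (illustrative only).
early_heavy = {"internet": 500, "mail": 300, "telephone": 150, "personal": 50}
late_heavy = {"internet": 100, "mail": 200, "telephone": 400, "personal": 300}

for label, mix in (("early-heavy", early_heavy), ("late-heavy", late_heavy)):
    total, average = collection_cost(mix)
    print(f"{label}: total ${total:,}, average ${average:.2f} per response")
```

Under these assumed mixes, the same number of completed responses costs at least three times as much when most of them arrive by telephone or personal interview.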

Response Rates and County Estimates

Since the 2014 Farm Bill, sufficient response rates on NASS surveys for crop planted acres, harvested acres, yield, and production to support county-level estimates can have an even more direct impact on many producers. The 2014 Farm Bill replaced commodity programs that provided direct payments to farmers regardless of adverse conditions with programs that trigger when a safety net is needed to offset revenue declines (e.g., farmdoc daily, February 20, 2014). One of those, the Agriculture Risk Coverage (ARC) program, relies heavily on NASS county yield estimates to determine if the program will provide payments in a given year on a given commodity and, if so, to what extent.

A majority of producers opted for the ARC-CO program (farmdoc daily, June 16, 2015), which provides farm payments when county crop revenue is less than the ARC-CO guarantee, calculated as 86 percent of the most recent 5-year Olympic average[2] price multiplied by the most recent 5-year Olympic average county yield.[3] While ARC payments have risen as commodity prices have fallen over the past few years, the payments under ARC-CO have not been uniform, by design. Under ARC-CO, the county payment rates per base acre will vary geographically based on the county-level yields in a given year. An integral part of administering the new program requires knowing what county yields were for covered commodities not only for the payment year, but also for the preceding 5-year period.
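The guarantee and payment arithmetic described above can be sketched in a few lines of Python. This is a simplified illustration, not FSA's implementation: the function names and all input numbers are hypothetical, actual county revenue is taken as a single price times the county yield, and the cap follows note [3] as written (10 percent of the guarantee). If the program rule ties the cap to a different base, only the `cap` line changes.

```python
from statistics import mean


def olympic_average(values):
    """Drop the single highest and lowest of five values; average the other three."""
    if len(values) != 5:
        raise ValueError("expected exactly five values")
    return mean(sorted(values)[1:-1])


def arc_co_payment_rate(prices, yields, actual_price, actual_yield,
                        guarantee_share=0.86, cap_share=0.10):
    """Per-acre payment rate: shortfall below the guarantee, limited by the cap."""
    benchmark = olympic_average(prices) * olympic_average(yields)
    guarantee = guarantee_share * benchmark
    actual_revenue = actual_price * actual_yield  # simplifying assumption
    shortfall = max(guarantee - actual_revenue, 0.0)
    cap = cap_share * guarantee  # cap base as stated in note [3]
    return min(shortfall, cap)


# Hypothetical five-year histories for one county and crop (not real data).
prices = [4.0, 5.0, 6.0, 3.0, 7.0]   # dollars per bushel
yields = [150, 160, 170, 140, 180]   # bushels per acre

rate = arc_co_payment_rate(prices, yields, actual_price=4.0, actual_yield=165)
print(f"payment rate: ${rate:.2f} per acre")
```

With these made-up inputs the Olympic averages are $5.00 and 160 bushels, so the guarantee is about $688 per acre, and an actual revenue of $660 produces a payment rate of about $28, well below the cap.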

So, how did USDA determine the yield estimates needed to implement the new ARC-CO program? Roughly 90 percent of yields needed to establish ARC-CO county average yields for all covered commodities were developed from about 41,500 county yield estimates–NASS survey data provided about 28,500 and Risk Management Agency (RMA) crop insurance data supported approximately another 13,000. The remaining 10 percent of ARC-CO county average yields were established using alternative methods as described below.

The NASS estimates are viewed as the gold standard at USDA since they are developed from a statistical framework that surveys large and small farms in an area-weighted probability sample. Because NASS has knowledge of plantings and other information, this approach generally achieves as much accuracy as possible with the least cost possible. That procedure first produces an overall U.S. estimate of yields, which can then be disaggregated by state and county. Basic statistical principles suggest that as one attempts to estimate the hundreds of county yields that make up the national yield, the amount of data needed increases dramatically. However, surveying more farmers or surveying the same farmers more frequently would cost more without any certainty that it could resolve the underlying problem of non-response; in fact, it might reduce the response rate further.

Because NASS county yield estimates require sufficient responses to develop a statistically valid estimate, response rates on NASS surveys are getting more attention. NASS must get at least 30 survey responses for a particular crop in a particular county, or it must receive survey responses from at least 3 producers representing a minimum of 25 percent of the county acreage in the particular crop. If those criteria are not met, then NASS will not publish a yield for that crop in that county in order to protect farmer privacy.
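The two publication criteria can be expressed as a simple check. The sketch below is only a reading of the rule as stated in this article, not NASS code: the function name and parameters are hypothetical, each response is assumed to come from a distinct producer, and the acreage share is computed from acres reported by respondents against total county acreage for the crop.

```python
def can_publish_county_yield(n_responses, responding_acres, county_acres,
                             min_responses=30, min_producers=3,
                             min_acreage_share=0.25):
    """Return True if either publication criterion described above is met."""
    # Criterion 1: at least 30 responses for the crop in the county.
    if n_responses >= min_responses:
        return True
    # Criterion 2: at least 3 producers covering at least 25% of crop acreage.
    if county_acres <= 0:
        return False
    acreage_share = responding_acres / county_acres
    return n_responses >= min_producers and acreage_share >= min_acreage_share


# Hypothetical counties (illustrative numbers only).
print(can_publish_county_yield(34, 9_000, 60_000))   # enough responses
print(can_publish_county_yield(5, 18_000, 60_000))   # few responses, 30% of acres
print(can_publish_county_yield(5, 6_000, 60_000))    # few responses, 10% of acres
```

The third case illustrates how a county with a handful of small respondents falls below both thresholds and drops out of the published estimates.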

When NASS doesn’t get enough responses to publish an estimate, the Farm Service Agency (FSA) of the USDA must rely on other data to develop yield estimates for implementing the ARC program. In those cases, FSA will use RMA yields, when available, but these may differ from the data a NASS estimate would provide. For example, RMA data may not be as representative of the county yield as a NASS statistical sample, since not all farms participate in the crop insurance program.

In the event that there is neither a NASS county estimate nor enough data to estimate an RMA county yield, the FSA State Committee will determine the county yield using best available data, including such possibilities as the NASS or RMA yield for a neighboring county, the NASS district yield estimate, or 70 percent of the transitional yield (or t-yield). NASS districts can include multiple counties, which may make the yield determination too high for some counties and too low for others.

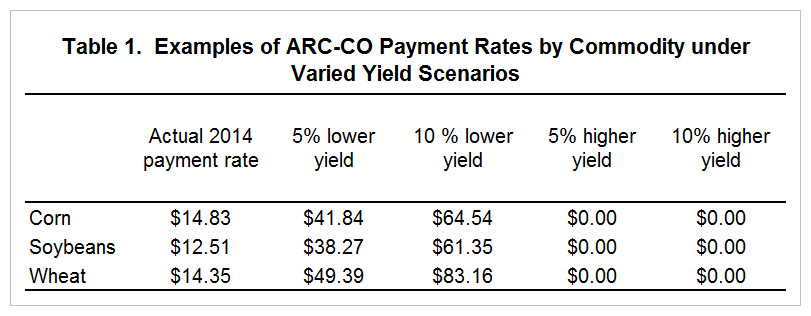

The yield estimates used to calculate ARC-CO payment rates determine the level of payments producers with base acres will receive for that year. A relatively small change in the yield estimate for a county can have a substantial effect on the payment rate, in part due to the tight range (10 percent) around the revenue guarantee that limits ARC-CO payments. Looking at an example county payment rate for corn (Table 1), a 5 percent decrease in yield leads to a 182 percent increase in the payment rate; a 10 percent decrease leads to a 335 percent increase in payment rate. The effects are even greater for the soybeans and wheat examples.
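A short derivation shows why the percentage changes are so large. The notation is ours and the setup is simplified: let $G$ be the per-acre guarantee, $R$ the actual county revenue (price times county yield), and $P = G - R > 0$ the payment rate before the cap. If the county yield estimate falls by a fraction $\delta$ with the price unchanged, revenue becomes $(1-\delta)R$, so

$$
P' = G - (1-\delta)R = P + \delta R,
\qquad
\frac{P' - P}{P} = \frac{\delta R}{G - R}.
$$

Because a payment is only a thin slice of revenue, the ratio $R/(G-R)$ is large. As a purely hypothetical illustration (not the Table 1 figures), if the initial shortfall equals 3 percent of actual revenue, a 5 percent lower yield raises the payment rate by $0.05/0.03 \approx 167$ percent, provided the cap is not reached.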

Achieving sufficient survey responses to support NASS yield estimates for as many counties as possible offers the best chance for ensuring that county yield estimates employed in determining ARC-CO payment rates are as accurate and comparable nationwide as possible. The accuracy of ARC county yields based on NASS data would clearly be enhanced by higher response rates. The risk protection of ARC is also dependent on the correlation of the payment with actual farm losses. ARC replaced the earlier ACRE program in part so that yields would be from ‘closer to home.’ Without county data the ARC payments are increasingly disconnected from the target county. Using data from larger areas increases the odds that payments do not trigger when they are needed by the farm.

Implications

Response rates to USDA-NASS acreage and yield surveys have fallen precipitously in recent years, in parallel with the experience of other government and academic surveys (Meyer et al., 2015; Anseel et al., 2010). A number of potential solutions have been proposed over time both to increase responses and to work around missing data. Some research suggests that tailoring survey approaches to differing audiences within the survey population could improve response rates (Anseel et al., 2010). Other data sources like remote sensing, weather data, modeling, machine data, or integrated datasets may also be useful in providing additional information. NASS already makes use of some of these other data sources and methods in developing estimates, but as a supplement, not a replacement, for survey data. Further use of such sources is costly. For now, the best approach remains encouraging greater producer response. It is also important to note that NASS surveys capture other valuable information. For example, the ARMS survey provides important insights into farm financial situations. NASS surveys also help in understanding technology adoption and prices received. All this information is reported freely and is available to all producers.

The value of and need for responses may best be tied back to why USDA was asked to produce such reports many decades ago: to assure the availability of information on the agriculture sector to all participants. The fact that USDA reports its estimates freely means that both buyers and sellers can have equal information about the supply and demand of a crop. Such information may come directly through USDA’s own reports, but often reaches users through news and information sources that depend on USDA reports to inform their clients and customers. In a market without this free information, large firms might well be able to invest in market intelligence that small firms and farms would not have available. Voluntary participation in surveys that gather such information is essential for USDA to continue its role as an objective, unbiased provider of market intelligence and is critical for accuracy in the design and implementation of farm policies.

Notes

[1] Another third (34%) of non-responses were accounted for as “refused but no reason given.”

[2] A 5-year Olympic average drops the highest and lowest years and takes the average of the remaining three.

[3] The total payment per acre may not exceed 10 percent of the ARC-CO guarantee.

References

Anseel, F., F. Lievens, E. Schollaert, and B. Choragwicka (2010) "Response Rates in Organizational Science, 1995-2008: A Meta-analytic Review and Guidelines for Survey Researchers," Journal of Business and Psychology 25:335-349.

Coppess, J., and N. Paulson. "Agriculture Risk Coverage and Price Loss Coverage in the 2014 Farm Bill." farmdoc daily (4):32, Department of Agricultural and Consumer Economics, University of Illinois at Urbana-Champaign, February 20, 2014.

Lusk, J. (2013), "From Farm Income to Food Consumption: Valuing USDA Data Products," C-FARE report No. 0114-299, Washington, DC (October; available at http://www.cfare.org/events/c-fare-events/2013/seminar-to-elucidate-the-value-of-usda-data).

McCarthy, J.S. and D.G. Beckler (2000) "Survey Burden and its Impact on Attitudes Toward the Survey Sponsor," U.S. Department of Agriculture, National Agricultural Statistics Service, Research Report, Washington, DC (available online at http://ageconsearch.umn.edu//handle/235077)

McCarthy, J.S., D.G. Beckler, and S.M. Qualey (2006) "An Analysis of the Relationship Between Survey Burden and Nonresponse: If We Bother Them More, Are They Less Cooperative?" Journal of Official Statistics 22(1): 97-112.

Meyer, B.D., W.K.C. Mok, and J.X. Sullivan. (2015) "Household Surveys in Crisis," Journal of Economic Perspectives 29(4):199-226

Ridolfo, H., J. Boone, and N. Dickey (2013) "Will They Answer the Phone if They Know It's Us? Using Caller ID to Improve Response Rates," U.S. Department of Agriculture, National Agricultural Statistics Service, Research Report No. RDD-13-01, Washington, DC (August; available at https://www.nass.usda.gov/Education_and_Outreach/Reports_Presentations_and_Conferences/reports/Area%20Code%20Report1-27-14.pdf).

Schnitkey, G., J. Coppess, N. Paulson, and C. Zulauf. "Perspectives on Commodity Program Choices under the 2014 Farm Bill." farmdoc daily (5):111, Department of Agricultural and Consumer Economics, University of Illinois at Urbana-Champaign, June 16, 2015.

Tran, H.N., M.W. Gerling, M. Mitchell, and T.P. O'Connor (2011) "Data Tables: Reasons for Nonresponse in the 2009 June Area Survey," USDA, NASS, RDD Research Report #RDD-10-04A (available online at https://www.nass.usda.gov/Education_and_Outreach/Reports,_Presentations_and_Conferences/reports/2009_jas_tables_report_april_12_2011.pdf).

USDA-NASS (2015) "2015 CAPS Row Crops Enumerator Training," online training module (November; available at http://www.nasda.org/File.aspx?id=38368).
